
Back to Blog

The Microstructure Diffusion Toolbox (MDT) is a framework and library for microstructure modeling of magnetic resonance imaging (MRI) data. The aim of MDT is to provide reproducible and comparable model fitting for MRI microstructure analysis. As such, we provide a common platform for microstructure modeling including many models that can all be processed using the same optimization routines. For maximum performance all models and algorithms were implemented to make use of all parallel processing capabilities of modern computers. MDT combines flexible modeling with fast processing, targeting both model developers and data analysts.

- Runs on Intel, Nvidia and AMD GPUs and CPUs.
- Runs on Windows, Mac and Linux operating systems.
- Free Open Source Software: LGPL v3 license.
- Computations are parallelized over voxels and over volumes.
- Offers graphical, command line and Python interfaces.
- Supports volume weighted objective function.
- Supports gradient deviations per voxel and per voxel per volume.
- Includes multiple (adaptive) MCMC sampling algorithms.
- Includes Powell, Levenberg-Marquardt and Nelder-Mead Simplex optimization routines.
- Includes Gaussian, Offset-Gaussian and Rician likelihood models.
- Includes CHARMED, NODDI, BinghamNODDI, NODDIDA, NODDI-DTI, ActiveAx, AxCaliber, Ball&Sticks, Ball&Rackets, Kurtosis, Tensor, VERDICT, qMT, and relaxometry (T1, T2) models.
- Human Connectome Project (HCP) pipelines.

MDT comes pre-installed with Human Connectome Project (HCP) compatible pipelines for the MGH and the WuMinn 3T studies. To run, after installing MDT, go to the folder where you downloaded your (pre-processed) HCP data (MGH or WuMinn) and run the fit from there; MDT will autodetect the study in use and fit your selected model to all the subjects.

Requirement: OpenCL 1.2 (or higher) support in GPU driver or CPU runtime.

For Debian and Ubuntu users:

- sudo add-apt-repository ppa:robbert-harms/cbclab
- sudo apt-get install python3-mdt python3-pip
- sudo apt-get install python3 python3-pip python3-pyopencl python3-numpy python3-nibabel python3-pyqt5 python3-matplotlib python3-yaml python3-argcomplete libpng-dev libfreetype6-dev libxft-dev

Note that python3-nibabel may need NeuroDebian to be available on your machine. An alternative is to use pip3 install nibabel instead.

A Dockerfile and Singularity recipe were kindly provided by Ali Khan (on github: akhanf). These containers come with Intel OpenCL drivers pre-loaded (e.g. for containerized deployment on a CPU cluster). For example, to install using Docker use docker build -f containers/Dockerfile.intel.

The installation on Windows is a little bit more complex and the following is only a quick reference guide. For complete instructions please view the complete documentation. Open an Anaconda shell and type: pip install mdt.

On Mac, open a terminal and type: pip install mdt. Please note that Mac support is experimental due to the unstable nature of the OpenCL drivers on Mac; users running MDT with the GPU as the selected device may experience crashes.
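The OpenCL 1.2 requirement mentioned for MDT can be checked before installing. The following is a minimal sketch, not part of MDT itself: the helper names (`parse_opencl_version`, `meets_minimum`, `list_devices`) are hypothetical, and it assumes the pyopencl package is available for the device listing. It relies on the fact that OpenCL version strings start with "OpenCL major.minor".

```python
import re


def parse_opencl_version(version_string):
    """Extract (major, minor) from a string such as 'OpenCL 1.2 CUDA 11.4'."""
    match = re.match(r"OpenCL (\d+)\.(\d+)", version_string)
    if match is None:
        raise ValueError(f"not an OpenCL version string: {version_string!r}")
    return int(match.group(1)), int(match.group(2))


def meets_minimum(version_string, minimum=(1, 2)):
    """True if the reported OpenCL version is at least `minimum`."""
    return parse_opencl_version(version_string) >= minimum


def list_devices():
    """Return (name, version string, ok) per OpenCL device, or [] without pyopencl."""
    try:
        import pyopencl as cl  # assumption: pyopencl is installed
    except ImportError:
        return []
    results = []
    # Note: get_platforms() may raise if no OpenCL runtime is installed at all.
    for platform in cl.get_platforms():
        for device in platform.get_devices():
            results.append((device.name, device.version, meets_minimum(device.version)))
    return results


if __name__ == "__main__":
    for name, version, ok in list_devices():
        print(f"{name}: {version} -> {'ok' if ok else 'too old'}")
```

Keeping the version parsing separate from the device query means the check can be exercised on machines that have no OpenCL runtime at all.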


John hoffmann xrg wsj law

2/1/2024

Moose was the key prosecution witness against Hoffman. He testified that he hired Johnny Hamilton to kill Roy Lowry because "somebody had raped" his sister, Hoffman's wife. He said he acted at Hoffman's instigation. Moose claimed that Hoffman had proposed the idea and later in a telephone conversation encouraged him to continue the search for a trigger man. William Doar conducted the cross-examination of Moose.

Hoffman took the stand in his own behalf and testified that in late November 1978, when he told Moose about Roy Lowry and Hoffman's wife, Moose had first offered to kill Lowry himself. Hoffman testified that after initially encouraging Moose to look for someone to kill Lowry, in late February or early March of 1979, he told Moose the plan was off and took back the money he had given Moose to pay a killer. Hoffman testified that he learned of the murder sometime in May 1979, when Moose demanded payment for the paid killer, claiming that Moose and Hoffman would be the next victims if payment were not made. Hoffman denied the telephone conversation with Moose in which he allegedly told Moose to keep looking for a killer. He did, however, testify that he was drinking heavily during this period.

Throughout the trial, the prosecutor continually and repeatedly made reference to Long's representation of Moose and Danielson, as well as Hoffman. A jury found Hoffman guilty as an accessory before the fact to murder and he was sentenced to life in prison.

The following evidence was adduced at the hearing held before the magistrate. William Doar testified that he was retained by Long when Hoffman's trial was transferred from Horry County to Georgetown County.


The song let it snow lyrics

2/1/2024

Here are some of the common throttling issues that you might face:

- Your DynamoDB table has adequate provisioned capacity, but most of the requests are being throttled.
- You activated AWS Application Auto Scaling for DynamoDB, but your DynamoDB table is being throttled.
- Your DynamoDB table is in on-demand capacity mode, but the table is being throttled.
- You have a hot partition in your table.
- Your table's traffic is exceeding your account throughput quotas.

For information on DynamoDB metrics that must be monitored during throttling events, see DynamoDB metrics and dimensions. These metrics might help you to locate the operations creating throttled requests and identify the cause for throttling. Use one or more of the following troubleshooting options based on your use case.

Your DynamoDB table has adequate provisioned capacity, but most of the requests are being throttled

DynamoDB reports minute-level metrics to Amazon CloudWatch. The metrics are calculated as the sum for a minute and then averaged. However, the DynamoDB rate limits are applied per second. For example, if you provisioned 60 write capacity units for your DynamoDB table, then you can perform 3600 writes in one minute. However, driving all 3600 requests in one second with no requests for the rest of that minute might result in throttling. The total number of read capacity units or write capacity units per minute might be lower than the provisioned throughput for the table. However, if all the workload falls within a couple of seconds, then the requests might be throttled.

To resolve this issue, make sure that your table has enough capacity to serve your traffic and retry throttled requests using exponential backoff. If you are using the AWS SDK, then this logic is implemented by default. For more information, see Error retries and exponential backoff.

Note: DynamoDB doesn't necessarily start throttling the table after the consumed capacity per second exceeds the provisioned capacity. With the burst capacity feature, DynamoDB reserves a portion of the unused capacity for later bursts of throughput to handle usage spikes.

You activated AWS Application Auto Scaling, but your table is still being throttled

AWS Application Auto Scaling isn't a suitable solution to address sudden spikes in traffic with DynamoDB tables. Application Auto Scaling initiates a scale up only when two consecutive data points for consumed capacity units exceed the configured target utilization value within a one-minute span. Application Auto Scaling automatically scales the provisioned capacity only when the consumed capacity is higher than target utilization for two consecutive minutes. Also, a scale-down event is initiated when 15 consecutive data points for consumed capacity in CloudWatch are lower than the target utilization.
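The advice to retry throttled requests using exponential backoff can be illustrated with a small sketch. This is not the AWS SDK's implementation (the SDK already retries by default, as noted above); `ThrottledError`, `backoff_delays` and `call_with_backoff` are hypothetical names, and the base delay, cap and "full jitter" strategy are assumptions chosen for illustration.

```python
import random
import time


class ThrottledError(Exception):
    """Hypothetical stand-in for a throttling error raised by a client."""


def backoff_delays(max_retries, base=0.05, cap=5.0):
    """Upper bound for each retry's wait: base * 2**attempt, limited to cap."""
    return [min(cap, base * 2 ** attempt) for attempt in range(max_retries)]


def call_with_backoff(operation, max_retries=5, base=0.05, cap=5.0, sleep=time.sleep):
    """Call operation(), retrying on ThrottledError with jittered exponential waits."""
    for bound in backoff_delays(max_retries, base, cap):
        try:
            return operation()
        except ThrottledError:
            # Full jitter: wait a random time between 0 and the current bound,
            # so that many clients do not retry in the same instant.
            sleep(random.uniform(0, bound))
    # Final attempt: if this one is throttled too, the error propagates.
    return operation()
```

Spreading retries out this way matters because the rate limit is applied per second: immediate retries land in the same second as the burst that caused the throttling.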


Panoply game

2/1/2024

The vase shows Achilles and Ajax playing a game during the Trojan War. Both men still have their shields, spears, and helmets at the ready. The vase is an Attic black-figure amphora, made c.540-530BCE by the greatest of the black-figure painters, Exekias, who has signed his name on the vase.

© Vatican Museum (344). Photo Steven Zucker.

The reverse of the vase shows the family of Helen of Troy. Before she was Helen of Troy, she had once been Helen of Sparta. The vase scene shows her parents, Tyndareos and Leda, and her twin brothers, Castor and Polydeuces (aka 'Pollux’ to the Romans) with a horse. Like the gaming scene on the other side, this is an image of famous figures in a moment of leisure.

Many vase-painters created versions of the gaming scene around this time. More than a hundred examples survive. Some scholars, such as Karl Schefold (1992, 273-4), have argued that the cup below shows the earliest surviving version of the Achilles-Ajax playing scene. They date it to c.550, making it earlier than the famous version by Exekias. Other scholars, notably Susan Woodford (1982) and Mary Moore (1980), consider that date much too early for that cup. They are amongst a large number of scholars who think that Exekias was the first to present the Achilles-Ajax gaming scene on a vase and that all other versions were based on his work. There is no consensus because so many of these vases were produced within a very small window of time, making it difficult to put the vases in chronological order on stylistic grounds.

© Vatican Museum (343). Photo from Schefold, 1992

Athena appears between the players in many of the Achilles-Ajax scenes. Athena was thought to have taken a keen interest in the Greek heroes at Troy and this is reflected in the vase-maker's decision to include her in this scene. The vase below is a hydria by a painter from the influential Leagros Group.

Hydria by a member of the Leagros Group. © Metropolitan Museum (56.171.29) c.510BCE

The amphora below, made c.530-520BCE, shows the same scene in two styles - making it what's known as a bilingual vase. It has a black-figure scene by the Lysippides Painter on one side (left) and a red-figure scene by the Andokides Painter on the other (right).

This bilingual amphora is housed in the Museum of Fine Arts, Boston. © MFA Boston (01.8037) c.500BCE

While a huge number of vases were decorated with the Achilles-Ajax gaming scene, it also featured on other items. Shield bands found at the sanctuary at Olympia show the familiar design, and a marble frieze found on the Athenian acropolis also seems to show the same episode. It’s been suggested that the acropolis marble may be the original image that inspired the vase-painters (see D.L Thompson, Arch.Class. 28, 1976).

Even with all these different versions, many still consider the Exekias vase to be the most artistically impressive. It is praised for the sophistication of its composition and the excellence of its execution. Laurie Schneider has demonstrated how careful Exekias was about the composition. The whole gaming scene is divided into three planes by the players’ spears. The middle plane is a triangle pointing downwards. Within it we see Achilles and Ajax’s heads at the top and their hands at the bottom. This effect draws our focus to the players’ faces and also communicates the idea that their heads (that is, their thoughts) are dictating the moves that their hands make.


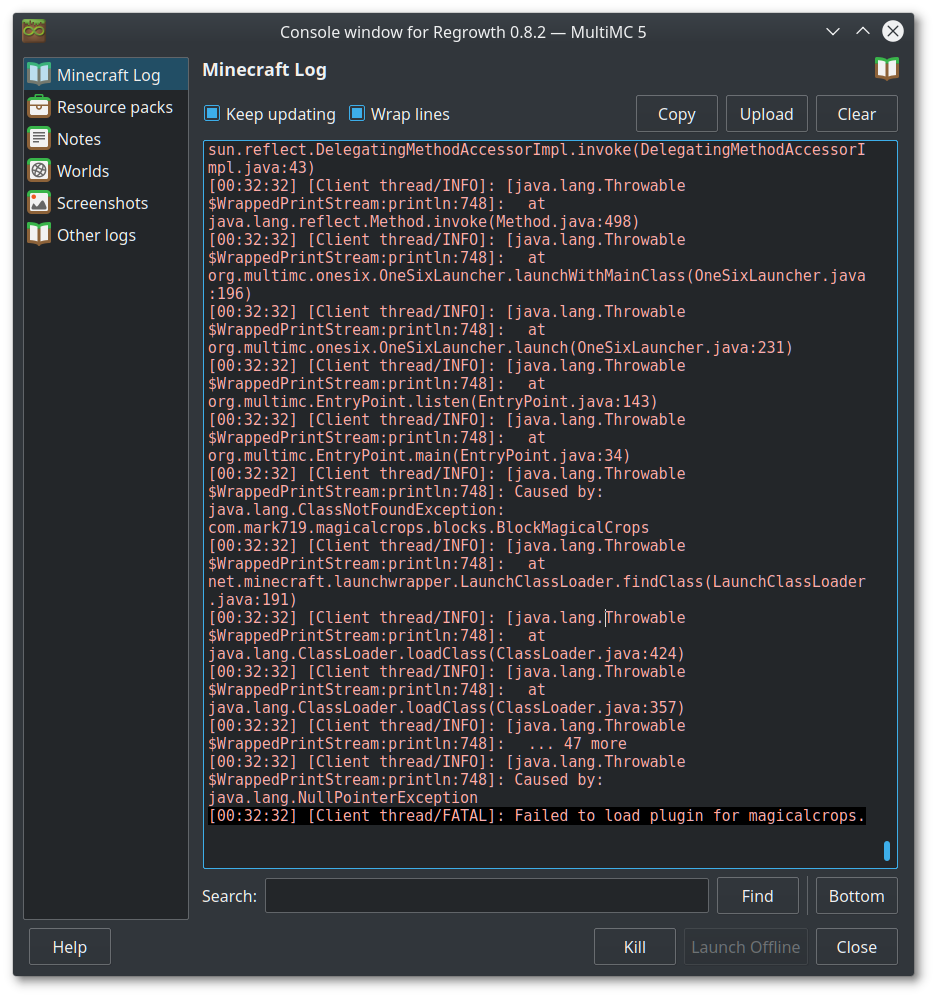

Multimc curseforge

2/1/2024

Allocating more RAM

Curseforge Guide

Launcher Settings

1. Click the settings cog in the bottom left corner of your Curseforge Launcher.
2. Navigate to Minecraft under the Game Specific group.
3. Scroll down to the Allocated Memory setting under the Java Settings group.
4. Allocate more RAM through the slider, noting that the recommended amount of RAM for MC Odyssey is 10GB while the minimum is 8GB.
5. Scroll down to the Additional Arguments setting under the Java Settings group.
6. Set the Arguments to your preferred argument. A preferred one is: -XX:+UseG1GC -Dsun.rmi.dgc.server.gcInterval=2147483646 -XX:+UnlockExperimentalVMOptions -XX:G1NewSizePercent=20 -XX:G1ReservePercent=20 -XX:MaxGCPauseMillis=50 -XX:G1HeapRegionSize=32M as posted by CPW in this Reddit Post.
7. Click on the three dots next to the Play button.
8. Scroll down to the Java Version setting under the Java Settings group.
9. Select the latest and highest number (preferably Java 1.8.0.301 or more; if it isn't available, please update your Java).

Go to the website, click "Download & Install", choose your operating system and download the program.

Extract the program to where you want it, such as your desktop, although somewhere close to the root of your drive would be better. You have installed MultiMC!

Adding the Modpack to MultiMC

Hit "Add Instance" -> Click "CurseForge" -> Search for "MC Odyssey" -> Click the pack -> Click "Ok" and wait for the pack to finish downloading.

EDIT: As of right now, this method does not work. You would probably have to use Curseforge to download the pack before moving over to MultiMC; follow the method above for that.

You should be able to download most mods through MultiMC/PolyMC and just move the mods over from Curseforge (or manually, if you'd like to ditch Curse completely).

Allocating More RAM

Right-click your MC Odyssey Instance -> Click Edit Instance -> Click Settings -> Tick "Memory"

Change the field "Maximum Memory Allocation" to your desired RAM amount in megabytes (1024 x gigabytes).

You will NOT need more than 8096MB to play MC Odyssey Lite. You will however want at least 10GB to play optimally, and allocating more may be worthwhile in the future.

Right-click your MC Odyssey Instance -> Click Edit Instance -> Click Settings -> Tick "Java arguments"

Add this to the text box below "Java arguments":

-XX:+UseG1GC -Dsun.rmi.dgc.server.gcInterval=2147483646 -XX:+UnlockExperimentalVMOptions -XX:G1NewSizePercent=20 -XX:G1ReservePercent=20 -XX:MaxGCPauseMillis=50 -XX:G1HeapRegionSize=32M

These JVM arguments improve performance and Java's garbage collection. They are recommended by CPW in this Reddit Post.

Fixing Modpack Installation

Sadly, MultiMC offers no built-in way of repairing your instances.
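The "1024 x gigabytes" rule for the MultiMC "Maximum Memory Allocation" field can be worked out with a tiny helper. This is only an illustrative sketch; `gigabytes_to_megabytes` is a hypothetical name and is not part of MultiMC or Curseforge.

```python
def gigabytes_to_megabytes(gigabytes):
    """Convert a RAM amount in gigabytes to the megabyte value the field expects."""
    if gigabytes <= 0:
        raise ValueError("RAM amount must be positive")
    return int(gigabytes * 1024)


if __name__ == "__main__":
    # Print the field value for a few common allocations.
    for gb in (8, 10, 12):
        print(f"{gb}GB -> {gigabytes_to_megabytes(gb)}MB")
```

For the 10GB recommended for MC Odyssey, this gives 10240 as the value to enter.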
